Monday, February 28, 2005

A Marketing Tool, A Game, Or Both?

The first advertisement appeared in USA Today a week ago, right on schedule.

People from around the world had stayed up all night waiting for it, talking in chat rooms and online forums. It had to be a clue, they thought. Everything before it had been a clue.

"LOST. The Cube," read the ad, posted at the top of the paper's "Notices" section. "Reward Offered. Not only an object of great significance to the city but also a technological wonder."

The cryptic notice and several subsequent ads in The New York Sun, The Times of London and Monday's Sydney Daily Telegraph are the first tangible signs of a mystery called "Perplex City" beginning to unfold online.

It is the latest well-funded entry in a young medium called "alternate-reality gaming"--an obsession-inspiring genre that blends real-life treasure hunting, interactive storytelling, video games and online community and may, incidentally, be one of the most powerful guerrilla marketing mechanisms ever invented.

These games are intensely complicated series of puzzles involving coded Web sites, real-world clues like the newspaper advertisements, phone calls in the middle of the night from game characters and more. That blend of real-world activities and a dramatic storyline has proven irresistible to many.

The Real Hotel Rwanda

The Hôtel des Mille Collines has managed to endure the traumatic events of 1994, depicted so forcefully in the movie "Hotel Rwanda," but much like Rwanda as a whole, it cannot shake the memory of the killing frenzy that took place just outside its leafy grounds and that left 800,000 or more ethnic Tutsis and moderate Hutus dead.

Here, far from the glitter of Hollywood and the fanfare of the Academy Awards, there are no physical reminders of those awful events. No plaque honors the bravery that occurred here. No memorial remembers the many Rwandans who did not manage to make it to safety inside.

The hotel, which was owned by the now bankrupt Belgian airline company Sabena, is on the auction block, and the thinking is that potential buyers are more interested in revamping the 32-year-old property for the future than in dwelling too much on its past.

Saturday, February 26, 2005

All in the Brain?

By one estimate, 700 new products are introduced every day. Last year, 26,893 new food and household products materialized on store shelves around the world, including 115 deodorants, 187 breakfast cereals and 303 women's fragrances. In all, 2 million brands vie for attention.

To find profit in so many similar items, marketers attempt to brand a product on a buyer's mind. Such efforts put the average American adult in the crosshairs of as many as 3,000 advertising messages a day - five times more than two decades ago.

Children are exposed to 40,000 commercials every year. By the age of 18 months, they can recognize logos. By 10, they have memorized 300 to 400 brands, according to Boston College sociologist Juliet B. Schor. The average adult can recognize thousands.

"We are embedded in an enormous sea of cultural messages, the neural influences of which we poorly understand," said neuroscientist Read Montague, director of the Human Neuroimaging Laboratory at Baylor College of Medicine in Houston. "We don't understand the way in which messages can gain control over our behavior."

Messages, of course, are culturally constituted symbols that can be interpreted differently. Greeting someone with an extended tongue and stamping feet might be offensive to many, but constitutes "good manners" to the Maori and others. These are physical behaviors which get interpreted differently by cultures, genders, age groups, and so on. I can understand why a biologist would be keenly interested in how the brain reacts to stimuli (i.e., marketing messages), or how those stimuli could even change brain functions as a result, but lacking a larger social and cultural context in which to situate these findings leaves this research leaning on the old crutch of biological reductionism, which itself is under attack from within the field.

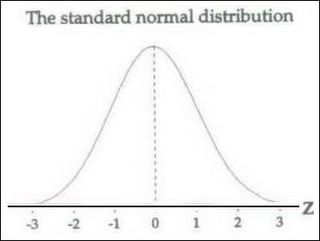

But apart from that, how many people are we extrapolating from? Representative samples are the keystone of social science, given the inherent problems in sampling (think of the exit poll debacle last November) and the need to adequately capture variance in a small subset of a much larger social entity. "Variance is inversely proportional to sample size," goes the old statistical dictum, meaning that if you mixed equal numbers of black and white marbles together and then scooped up a sample with both hands, you would get nearer to a 50/50 count than with a small handful (which is more likely to be all black or all white). But if you are a reductionist, there is little need for such research design protocols. So, what size are we talking about here?

After analyzing test data from 21 men and women, Quartz and Asp discovered that consumer products triggered distinctive brain patterns that allowed them to classify people in broad psychological categories.

At one extreme were people whose brains responded intensely to "cool" products and celebrities with bursts of activity in Brodmann's area 10 - but reacted not at all to the "uncool" displays.

The scientists dubbed these people "cool fools," likely to be impulsive or compulsive shoppers.

At the other extreme were people whose brains reacted only to the unstylish items, a pattern that fits well with people who tend to be anxious, apprehensive or neurotic, Quartz said.

The reaction in both sets of brains was intense. The brains reflexively sought to fulfill desires or avoid humiliation.

Twenty-one. Call me cynical, call me an angry fellow, but I am more than a bit skeptical that samples of this size will capture the inherent variation that we see in most biological and social structures. And, as with many other university-based experiments, will future researchers control for variables such as gender, self-reported race, educational levels, levels of sociability, etc., or will the "representative sample" consist of freshman and sophomore students, as in many behavioral economics experiments? Finding two "broad psychological categories" with n=21 should not really constitute a meaningfully innovative discovery, given the following:

The answer to almost everything.
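The marble dictum quoted earlier in this post can be checked with a few lines of Python. What follows is only a rough sketch, assuming marbles are drawn independently from a very large, evenly mixed jar (so each draw is black with probability 0.5). The function names are made up for illustration and come from neither the study nor the article.

```python
import random
import statistics


def proportion_variance(n, p=0.5):
    """Theoretical variance of the share of black marbles in a draw of n.

    Assumes independent draws, each black with probability p
    (an illustrative assumption, not something stated in the article).
    """
    return p * (1 - p) / n


def prob_single_colour(n, p=0.5):
    """Probability that a draw of n marbles comes up all black or all white."""
    return p ** n + (1 - p) ** n


def simulated_variance(n, trials=5000, p=0.5, seed=0):
    """Estimate the same variance by repeatedly scooping up n marbles."""
    rng = random.Random(seed)  # fixed seed so reruns give the same numbers
    shares = []
    for _ in range(trials):
        blacks = sum(rng.random() < p for _ in range(n))
        shares.append(blacks / n)
    return statistics.pvariance(shares)


if __name__ == "__main__":
    # A small handful, the study's n = 21, and a two-handed scoop.
    for n in (3, 21, 200):
        print(
            n,
            round(proportion_variance(n), 5),
            round(simulated_variance(n), 5),
            prob_single_colour(n),
        )
```

Under these assumptions the variance of the black-marble share is 0.25/n, so it shrinks in direct proportion to the size of the scoop. A handful of three marbles comes up a single colour one time in four, while a scoop of 21 does so about once in a million draws. A jar of marbles has only two colours, of course, which is rather less variation than 21 people shopping.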

I Can Read, I Can Spell

Partison Social SecurityRhetoric Disputed Democrats and Republicans are twisting facts to back up claims that Social Security is going broke or on solid ground.

– Jim VandeHei and Jonathan Weisman

UPDATE 10:40 AM Pacific

Rats! Some scheming editor has now changed the headline to grammatically correct English, taking away from the fun.

Experts Poke Holes in Partisan Social Security Rhetoric Democrats and Republicans are twisting facts to back up claims that Social Security is going broke or on solid ground.

– Jim VandeHei and Jonathan Weisman

The Past Through Neo-Colored Glasses

In England right-wing historians are portraying the last lion as a drunk, a dilettante, an incorrigible bungler who squandered the opportunity to cut a separate peace with Hitler that would have preserved the British Empire. On the American right, by contrast, Churchill idolatry has reached its finest hour. George W. Bush, who has said "I loved Churchill's stand on principle," installed a bronze bust of him in the Oval Office after becoming president. On Jan. 21, 2005, Bush issued a letter with "greetings to all those observing the 40th anniversary of the passing of Sir Winston Churchill." The Weekly Standard named Churchill "Man of the Century." So did the columnist Charles Krauthammer, who in December 2002 delivered the third annual Churchill Dinner speech sponsored by conservative Hillsdale College; its president, Larry P. Arnn, also happens to belong to the International Churchill Society. William J. Luti, a leading neoconservative in the Pentagon, recently told me, "Churchill was the first neocon." Apart from Michael Lind writing in the British magazine The Spectator, however, the Churchill phenomenon has received scant attention. Yet to a remarkable extent, the neoconservative establishment is claiming Churchill (who has just had a museum dedicated to him in London) as a founding father.

Friday, February 25, 2005

Another Round of Omission?

Ordinarily, revelations that a former male prostitute, using an alias (Jeff Gannon) and working for a phony news organization, was ushered into the White House -- without undergoing a full-blown security background check -- in order to pose softball questions to administration officials would qualify as news by any recent Beltway standard. Yet as of Thursday, ABC News, which produces "Good Morning America," "World News Tonight With Peter Jennings," "Nightline," "This Week," "20/20" and "Primetime Live," has not reported one word about the three-week-running scandal. Neither has CBS News ("The Early Show," "The CBS Evening News," "60 Minutes," "60 Minutes Wednesday" and "Face the Nation"). NBC and its entire family of morning, evening and weekend news programs have addressed the story only three times. Asked about the lack of coverage, a spokesperson for ABC did not return calls seeking comment, while a CBS spokeswoman said executives were unavailable to discuss the network's coverage.

A Long, Strange Trip

A British student has an American dream - and it involves going illegal whale-hunting in landlocked Utah.

This July, Richard Smith, 23, and Luke Bateman, 20, both from Cornwall, are planning to travel 18,000 miles across the land of the free while breaking as many of its ridiculous and arcane local laws as possible.

Smith told the Western Morning News that the pair will risk being arrested for falling asleep in a cheese factory in South Dakota and playing golf in the streets of Albany, New York. In Jonesborough, Georgia, they will attempt to drive local police mad by illegally saying "oh boy". Let's hope Downing Street is braced for the diplomatic storm.

Smith, from Portreath, says he got his inspiration from a board game featuring details of a law that forbids widows in Florida from going parachuting on Sundays. Further research showed the US was not lacking in oddball legislation.

The pair plan to break around 40 strange state and town laws as they cross 26 states. They will start in California, where it is illegal to ride a bike in a swimming pool, and end in Connecticut, in the east, where it is illegal to cross the road while walking on your hands.

It may not surprise you to learn that Smith is a journalism student who is in discussions with a book publisher, and he plans to film the adventure in case television producers come running. His plans follow in the crazy caper tradition of Dave Gorman and Tony Hawks.

The Western Morning News reports that Smith has a knack for this kind of thing. Last year, he drove through the night to Normandy to attend the 60th anniversary of the D-day landings because someone bet him £20 that he would not do it.

Asked whether he was worried about imprisonment on his US mission, Smith, who studies at Cornwall College Camborne, said: "I think there's more chance I will get arrested for the way I break the laws than for breaking the laws themselves. Who knows, there might actually be a good reason for their existence."

Let's hope those crazy guys at the Department of Homeland Security see the funny side.

Thursday, February 24, 2005

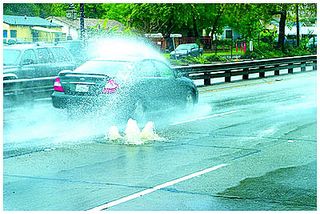

Wetter Than Seattle!

No more of this for almost a week!

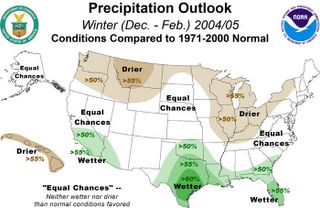

The sun has finally reappeared in SoCal today, so we get almost a week to dry out before the next storm system wades ashore from the Pacific. The picture above is on the 110 Freeway in Highland Park - the storm drain system is so full that water is spraying up from manhole covers in the middle of the road, making for some nice traffic jams in a place where you don't expect to see a fountain. This led me to wonder what the experts were thinking back in 2004 about this winter's rain totals. A quick trip to the NOAA site led me to this prediction from October 6, 2004:

The precipitation outlook calls for wetter-than-average conditions in parts of California, the extreme Southwest and across the Southern U.S.—from Texas to Florida. Drier-than-average conditions are expected in the Midwest, northern Plains and Pacific Northwest.

The winter outlook indicates some improvement in drought conditions in the West, but long-term drought is expected to persist through the winter in many areas.

Seems the weather experts were right after all.

Winter rain outlook back on October 6, 2004.

Woman in the Picture

Forget Nurse Betty, meet Nurse Edith

Courtesy of LA Observed, we read that Edith Shain, the nurse in the famous VJ-Day photo, will be the grand marshal at the Canoga Park Memorial Day Parade this year. From today's L.A. Daily News:

The greatest kiss of all time was between a 27-year-old nurse and an unidentified sailor almost 60 years ago in Times Square on V-J Day, Aug. 15, 1945.

The Life magazine photograph of two strangers sharing a long, passionate kiss to mark the surrender of Japan and the end of World War II cut right to the war-weary heart of this nation.

Romance was back. It was time to celebrate and party again. The war was over and we had won. Anything and everything was possible. The proof was right there in Life magazine -- in that one passionate, unforgettable kiss.

"It's amazing how people still respond in awe to that photograph, especially when they find out I was the nurse," says 86-year-old Edith Shain, a retired Los Angeles Unified School District teacher who will be an honorary grand marshal in the Canoga Park Memorial Day Parade this year.

Genetics and Race in the TLS

When I apply to a United States government agency for a research grant, I’m asked to tick a box specifying whether I’m American Indian / Alaska Native, Asian, Black / African American, Native Hawaiian / Other Pacific Islander, or White. While there are people whose mixed ancestry puts them somewhere outside these boxes, in general one’s race – even as categorized on government forms – is self-evident. Nevertheless, immediately below the boxes there’s a disclaimer: “The categories in this classification are social-political constructs and should not be interpreted as being anthropological in nature”. On the basis of biological indicators such as ancestry or skin colour, I’ve specified my race, but now I’m told that all I’ve done is specified membership in a particular “social-political construct”.

Presumably lost on the bureaucrats who create such forms is the irony that America’s geneticists – experts on the biological basis of differences among groups – are being assured that the self-assessment they make on biological grounds is, in fact, not biological. This contradiction captures the tension beneath much of the current debate on race. It also highlights the peculiar American slant on this debate. While my colleagues in the United Kingdom must also specify their ancestry when applying for government funds, they are not subjected to double-speak disclaimers. Racism in the US, courtesy of a society built largely on slavery, has an especially long and sordid history, and remains a divisive hot-button issue. In many discussions of American social policy, racism is the proverbial elephant in the living room: recognized but carefully ignored.

Add These to the List

"The Manhattan Beach Project" by Peter Lefcourt (Stephanie Zacharek)

"Johnny Too Bad" by John Dufresne (Andrew O'Hehir)

"A Thread of Grace" by Mary Doria Russell (Laura Miller)

"How to Fall" by Edith Pearlman (Andrew O'Hehir)

"Enchantments" by Linda Ferri (Priya Jain)

Southern Culture in Flux

Beyond Confederate flags coming down from statehouses, more-mundane symbols are increasingly being questioned on the local level: in town halls, college campuses, and even cemetery committees. It's part of a deepening homogenization of Southern culture that's causing anger and resentment among many in a proud region with perhaps 65 million people who consider themselves Southerners.

Some observers see a note of irony in the growing suppression of conservative Southern memorials at a time when old Confederate values like militarism, chivalry, gentility, and religiosity are gaining political prominence. It's a lesson, they say, in how a rebellious American region maintains its influence beneath pressure to rescind its mottoes and murals.

"The shooting war is over, but ... we're engaged in a cultural war for the heart and soul of the South and for America, too," says William Lathem, spokesman for the Southern Heritage PAC in Atlanta.

The Literary Deconstruction of Wal-Mart

The apparent purpose of the speech was to counter political resistance to the building of Wal-Mart "supercenters" in California. But if Scott saw much danger that Wall Street might believe his rosy picture of labor relations, he wouldn't paint it, because that would create an investor stampede away from Wal-Mart stock. What we have, then, is a unique rhetorical form: Nonsense recited by someone who is relying on most of his listeners to understand that he is spouting nonsense. Wal-Mart took the trouble to send this speech out to writers "who are in a position to influence a lot of others," according to a cover e-mail I received from Mona Williams, Wal-Mart's vice president for corporate communications. I took Williams' email as a plea to expose the dishonesty in Scott's remarks (Stop us before we kill again!) disguised as a plea to give Scott's remarks a fair hearing. I will try to oblige.

L.A. Stories

And what became of the so-called Virgin Mary grilled cheese sandwich that, a few months ago, sold on eBay for $28,000 (even though the image looks more like a brunette Mae West)? It was purchased by online-gaming giant GoldenPalace.com and has become a mascot for the company’s popular eating contests. Last week, the company bought the similarly significant McDonald’s “Lincoln fry” — a 4-inch strip of fried potato that contains a partial bust of President Lincoln at one end — for $75,100.

“This is a great day for marketing,” GoldenPalace.com CEO Richard Rowe stated in the company’s press release. “And a great day for Abe Lincoln.”

A few days prior, on the afternoon of Abe Lincoln’s 196th birthday, an enthusiastic crowd of about 200 fills the bleachers at Muscle Beach to watch “The Passion of the Toast,” a 10-minute all-you-can-eat competition, and to get a glimpse of the Virgin Mary grilled cheese, which GoldenPalace.com’s Steve Baker carries around in a sturdy glass case.

Tell it to the Red Sox Fans

As well as providing succour for those troubled by the existential dilemma, religion, or at least a primitive spirituality, would have played another important role as human societies developed. By providing contexts for a moral code, religious beliefs encouraged bonding within groups, which in turn bolstered the group's chances of survival, says Pascal Boyer, an anthropologist turned psychologist at Washington University in St Louis, Missouri. Some believe that religion was so successful in improving group survival that a tendency to believe was positively selected for in our evolutionary history. Others maintain that religious belief is too modern to have made any difference.

"What I find more plausible is that rather than religion itself offering any advantage in evolutionary terms, it's a byproduct of other cognitive capacities we evolved, which did have advantages," says Boyer.

Wednesday, February 23, 2005

Mystery Feline Shot

Tiger Shot and Killed Near Reagan Library

By Amanda Covarrubias

Times Staff Writer

11:12 AM PST, February 23, 2005

An elusive tiger that prowled through Ventura County near the Ronald Reagan Presidential Library was killed this morning in Moorpark, but its origin remains a mystery.

The tiger, weighing between 400 and 600 pounds, was sighted walking behind the houses and through the ravines around Highway 23. Nearby is Miller Park, with well-used soccer fields.

According to Troy Swauger, a spokesman for the state Department of Fish and Game, the tiger was spotted about 6:30 a.m., walking through the yard of the Tucker family.

"It was old and tired-looking. It was walking along our fence and then went to our next-door neighbor's yard," Mary Tucker said.

"It was just a weird thing to see him in our backyard," husband Ken Tucker said.

Tuesday, February 22, 2005

When in Rome, Walk This Way

Walk Like a Man

When disembarking at the airport, put on your best New York scowl and act like you know what you're doing, even if you don't. Uncertain body language is like blood in the water for robbers, touts and con artists, all of whom lurk outside Customs like skycaps.

Come Prepared

Carry a pocketful of ballpoint pens. They make handy, non-monetary gifts for street children. Many schools in poor countries can't afford to give their students anything to write with.

An All-Purpose Conversation Starter in Youth Hostels

"Hey, are you from Australia?"

Two Drunks, a Bottle, Some Rifles....

In early August of 1990 I went to Aspen, Colo., to cover what looked as if it would be a rather banal summit involving Margaret Thatcher and George Bush. (The meeting was to be enlivened by the announcement of the forcible annexation of Kuwait by Saddam Hussein, who would go on to trouble our tranquility for another 13 years.) While the banal bit was still going on, the city invited the visiting press hacks for a cocktail reception at the top of an imposing mountain. Stepping off the ski lift, I was met by immaculate specimens of young American womanhood, holding silver trays and flashing perfect dentition. What would I like? I thought a gin and tonic would meet the case. "Sir, that would be inappropriate." In what respect? "At this altitude gin would be very much more toxic than at ground level." In that case, I said, make it a double.

Thanks for the Memorex

The papers and the talkers were abuzz with news of Doug Wead's tapes of George W. Bush over the weekend. There's plenty in there to discuss: Bush's admission – implicit but unequivocal – that he used marijuana in the past, his warning that Republicans shouldn't "kick gays," his prickliness about attacks perceived and real, and his ruminations about what a great Supreme Court justice John Ashcroft might make.

But the most interesting thing about the recorded conversations is that there's apparently a whole lot more of them still out there. Wead says that he didn't mean to harm the president by letting the New York Times listen to excerpts from the tapes. As proof, he points to the fact that he has refrained from releasing most of the tapes he has. "Ninety percent of the tapes have not been heard," Wead told the Washington Post. "If I released all the tapes, it would be an act of betrayal. Most of them have never seen the light of day and never will."

Wead's decision to hold back may explain the White House's muted response to the tapes so far. The Bush administration has never been shy about lashing out at those who embarrass the president. Just ask Paul O’Neill or Richard Clarke. But the White House has all but admitted the authenticity of Wead's recordings, and it has done little yet to discredit Wead himself. "The governor was having casual conversations with someone he believed was his friend," White House spokesman Trent Duffy told the Times.

Maybe the Bush team hopes that reacting quietly will help the story go away. But maybe it's something else. Maybe Bush figures that a man still sitting on a stack of tapes isn't a man he wants to hassle.

Thursday, February 17, 2005

Suburban Dystopia - L.A. Style

Families have long flocked to this master-planned community 35 miles north of downtown Los Angeles because of the pristine parks and high-performing schools in the fervent belief it is a good place to raise children.

But some families who moved to the Santa Clarita Valley to escape the noise, crime and decay of the city are finding that life is not so comfortable in the manicured suburbs.

The valley has been roiled over the last few months by claims from at least half a dozen African American families that their children have been targets of intolerant, even racist, behavior from their white peers. They say the white teens have continually bullied, harassed and attacked their children at school and off campus for no apparent reason, other than the color of their skin.

The attacks, they said, occurred when youths were walking home from school, going to the park or visiting friends. The incidents have shaken the community because the alleged assailants are not skin-head outsiders but other teenagers who live among them in the pricey subdivisions.

Gannongate and "Real" News

By my count, "Jeff Gannon" is now at least the sixth "journalist" (four of whom have been unmasked so far this year) to have been a propagandist on the payroll of either the Bush administration or a barely arms-length ally like Talon News while simultaneously appearing in print or broadcast forums that purport to be real news. Of these six, two have been syndicated newspaper columnists paid by the Department of Health and Human Services to promote the administration's "marriage" initiatives. The other four have played real newsmen on TV. Before Mr. Guckert and Armstrong Williams, the talking head paid $240,000 by the Department of Education, there were Karen Ryan and Alberto Garcia. Let us not forget these pioneers - the Woodward and Bernstein of fake news. They starred in bogus reports ("In Washington, I'm Karen Ryan reporting," went the script) pretending to "sort through the details" of the administration's Medicare prescription-drug plan in 2004. Such "reports," some of which found their way into news packages distributed to local stations by CNN, appeared in more than 50 news broadcasts around the country and have now been deemed illegal "covert propaganda" by the Government Accountability Office.

And, in an even more penetrating analysis, Rich reminds us about prescreened and scripted town hall meetings, faux "news" channels run by the Pentagon, and government "minders" for the Washington press corps.

It is a brilliant strategy. When the Bush administration isn't using taxpayers' money to buy its own fake news, it does everything it can to shut out and pillory real reporters who might tell Americans what is happening in what is, at least in theory, their own government. Paul Farhi of The Washington Post discovered that even at an inaugural ball he was assigned "minders" - attractive women who wouldn't give him their full names - to let the revelers know that Big Brother was watching should they be tempted to say anything remotely off message.

The inability of real journalists to penetrate this White House is not all the White House's fault. The errors of real news organizations have played perfectly into the administration's insidious efforts to blur the boundaries between the fake and the real and thereby demolish the whole notion that there could possibly be an objective and accurate free press. Conservatives, who supposedly deplore post-modernism, are now welcoming in a brave new world in which it's a given that there can be no empirical reality in news, only the reality you want to hear (or they want you to hear). The frequent fecklessness of the Beltway gang does little to penetrate this Washington smokescreen.

Last November, I listened to Terry Gross interview New Yorker writer Hendrik Hertzberg on Fresh Air. His observation - and a good one at that - was that the Bush administration's ethical tribulations up to that time mirrored those of the Nixon administration just prior to the 1972 election - only months before the Watergate break-in was splashed over the front page of the Washington Post. Gannongate, and other "surprises" released only after the election (e.g., FAA reports on Al-Qaeda ignored by Rice and others), might just be the beginning of something big and sordid.

Death Pays

Anyone who's had the pleasure of shopping for funeral services knows how expensive a funeral can be. Even a basic pine box seems to cost thousands of dollars. Now, I have a better understanding as to why. Last week, buried at the bottom of a press release on various management changes, funeral services giant Service Corp. International (SRV) noted that its vice chairman, B.D. Hunter, planned to step down, but would remain on as a consultant. What wasn't included in the press release (but was in the 8-K the company filed on Tuesday) was just how much Hunter will be paid: $91,667 a month in exchange for devoting "substantially his full time to the business of the company" (whatever that means). He'll collect that monthly stipend for the next three years, before the fee drops down to a measly $50K a month in February 2008.

Sympathy for the Vatican

The Vatican's exorcism rule book warns priests the devil "goes around like a roaring lion looking for souls to devour", but such is the perceived rise in his worship that a Catholic university is offering clerics classes to better identify their foe.

The two-month course at Regina Apostolorum, one of Rome's most prestigious pontifical universities, has arisen out of concern in the Vatican over a series of high profile court cases linking ritualised murders to Satanism.

The courses, starting today, will deal with demonology, the presence of the notion of the devil in sacred texts, and the pathology and medical treatment of people suffering from possession.

Art and the Bottom Line

After wading through stacks of economic and educational studies used to drum up arts funding, Rand Corp. researchers say the numbers don't make a persuasive case and that arts advocates should emphasize intrinsic benefits that make people cherish the arts.

The Rand report, "Gifts of the Muse: Reframing the Debate About the Benefits of the Arts," issued Tuesday, says that trumpeting the most quantifiable and utilitarian benefits doesn't address the biggest long-term challenge facing arts organizations: cultivating an arts-savvy public that wants what museums and performing groups offer.

To that end, Rand proposes that advocates become less fixated on what the arts can do for business growth and kids' math and reading scores, and instead stress intangibles such as enchantment, enlightenment and community-building.

Chabon in Denver

Chabon's talk—for which I would have suggested the title "The Importance of Lying, The Pitfalls of Memory," after a couple phrases he used—turned out to be about the genesis of his first novel, The Mysteries of Pittsburgh. (Unfortunately, this is the only Chabon novel I haven't read, and if I'd known, I would have read that instead of The Final Solution last weekend. But I digress.) He began the book while living with his mother and step-father in the summer of 1985, right before entering Cal-Irvine's MFA program. The first attempts were pecked out in a humble little bunker he called "Ralph's room," after the previous occupant—he worked at an extremely high workbench, so he had to sit in a folding chair precariously perched on a steamer trunk, and composed on an Osborne 1a computer that he bought in 1983. The room smelled of "soil, coal dust, and bicycle grease." He was living somewhat under a cloud at that time, and described his skull that summer as full of "loneliness, homesickness, and women in short pants."

Wednesday, February 16, 2005

Canadian Counter-Culture Consumers, and More

The fact that the authors admit that, in their, uh, advanced 30-something age, they were duped by the countercultural rallying cries of their youth leads them to conclude: “The rebellion against aesthetic norms is not actually subversive.” It’s a thesis certain to enrage. In fact, it already has — but not in the U.S. (at least not so far). Reaction to the book in Canada, where it goes under the somewhat more strident title of The Rebel Sell, is described by Potter as “uniformly bad” — and not because they tweak Michael Moore and their fellow Canuck, Culture Jam author Kalle Lasn, for advocating an uncompromising (and unrealistic) “smash the marketplace” attitude over less-sexy changes in institutional laws. Heath and Potter recently appeared at a lecture at the University of British Columbia in Vancouver, chiding their countrymen good-naturedly for their organic-vegetable obsession, which they cast as “a remnant of ’60s technophobia” and a misguided attempt at anti-corporate “ethical consumption” — not to mention a bit smug and aristocratic. Heath and Potter maintained that growing them or buying them does not really strike a blow against consumerism; it just creates a market for more expensive vegetables — thus exacerbating competitive consumption rather than reducing it.

Turns out the Vancouverites didn’t like that one bit. “The very idea of organic vegetables as ‘yuppie food’ generated more heat in Vancouver than it did the U.S.,” laughs Andrew Potter, referring to this kind of countercultural obsession with “micro-issues” that take attention away from simpler solutions — namely, separating politics from the counterculture entirely. “We argue that ethical consumption is not a substitute for political action,” he says. “Only legislative action is.”

Amateur Anthropology

In an interview in 2000, Eva Hoffman observed, “I think every immigrant becomes a kind of amateur anthropologist—you do notice things about the culture or the world that you come into that people who grow up in it, who are very embedded in it, simply don't notice. I think we all know it from going to a foreign place. And at first you notice the surface things, the surface differences. And gradually you start noticing the deeper differences. And very gradually you start with understanding the inner life of the culture, the life of those both large and very intimate values. It was a surprisingly long process is what I can say.” A process that seems not to have an end point, as the conversation below exhibits.

Tuesday, February 15, 2005

American Dialectics

The foray of mental diviners - scientific or not - into the national character is as old as the republic, and I would have been interested in an argument which included sound extrapolation from national data. But, not surprisingly, Gartner falls back on the rhetorical toolbox of psychotherapy: extrapolate from the one, or the few, to the millions:

Gartner sets out to prove his case not through contemporary case studies or the aggregation of vast quantities of data, but through brief, lively studies of key hypomanics from five different centuries.

But shortly thereafter, Gross gets to the real point which caught my attention:

It's a fun read. But Gartner's diagnosis overlooks the more rational factors that were crucial to the settling of America and the construction of our unique economic and business culture. The British Protestants who crossed the Atlantic Ocean in the 17th century came for God, but they also came for the cod. And the timber and the tobacco. By the time John Winthrop arrived in Massachusetts, non-dissenting settlers—economic opportunists, not prophets—had been farming and trading in Jamestown, Va., for more than 20 years.

Immigrants like Hamilton, Carnegie, and David Selznick's parents may have been hypomanic. But whether you were a landless peasant in Ireland in the 1840s, a Jewish cobbler in Russia in 1910, or an Indian computer programmer in the 1980s, the decision to move to America made profound economic sense. America had cheap land in abundance. The opportunities—if occasionally overblown—were real. So was the infrastructure that provided for the rule of law, capital markets, and the protections of minority rights.

In fact, practicality and realism have coexisted with messianism and utopianism in the American experiment from the very beginning. Benjamin Franklin was almost certainly hypomanic by Gartner's reckoning, but he was also one of the most relentlessly practical Americans of the 18th century. The U.S. economy has been distinguished by hypomanic booms and busts and by the creative destruction that lies at the heart of entrepreneurial capitalism. But it's also distinguished by durable systems and institutions that are emblematic of our distinct style of managerial capitalism—the Federal Reserve and the New York Stock Exchange, our telecommunications networks, and Procter & Gamble. Such institutions are not the work of flamboyant geniuses but of tons of thoughtful, far-sighted, and average Americans.

Juxtaposing "practicality and realism" with other seemingly contradictory values allows Gross to argue that no single element can be wholly explanatory (contra Gartner, and contra psychological reductionism of social and cultural phenomena); the explanation lies, rather, in value systems which, he reminds us, have "coexisted...in the American experiment from the very beginning." This point of view has been explored not only by social philosophers but, more recently, by anthropologists. Some weeks ago I had the opportunity to revisit Charles W. Nuckolls' enlightening (and readable) work "Culture: A Problem That Cannot Be Solved" (Wisconsin, 1998), which puts this finding at the heart of its argument: many cultural systems of knowledge are rooted in dialectical relationships between opposing values, and must be understood and explained with regard to this ongoing tension. I recommend it to those who might be interested in the topic, if not those who are predisposed to simplistic, modal explanations of American values. [Nuckolls examined three cases for his argument: cooperation and self-gratification among the Ifaluk in Micronesia; community and individualism in Oklahoma; and opposition within disorder characteristics in American psychiatry.] The following from his preface is a brief description of the argument:

The conclusion of this book is that culture is a problem that cannot be solved, and thus it contradicts the view that culture is a solution to existential problems that all human beings share. This is a statement of an extremely one-sided position, and is probably wrong, but the purpose of the argument is to show that extreme positions are productive when they exist in unrelieved tension with their opposites. This is as true of the book as it is of its ethnographic subjects.

As an example, consider the topic that informs much of the book: the conflict between American values of independence and dependence, or individualism and collectivism. Should we be ruggedly individualistic and cooperate only to the extent that it serves our individual interests? Or should we construct social institutions on the model of the family and form intense and long-lasting dependency relations that are not contingent on calculations of personal gratification? Some, like Tocqueville, argue that the conflict between these two positions defines American culture, but whether that is true or not, the real question is this: Can a culture be understood as an opposition between equally desirable alternatives, such that cultural knowledge systems come into being as partial (and necessarily temporal) solutions to problems that can never be resolved?

Certainly, no one expects the conflict between American individualist and communitarian perspectives to be resolved. But in the absence of resolution, the attempt to satisfy opposing ideals shapes the development of knowledge in disparate domains, from political parties (Republican versus Democratic) to the construction of gender (male versus female) to psychiatric categories (antisocial versus histrionic). Americans work through the dialectics constituted by opposing values. In the process they make up whole knowledge systems - systems that develop in pursuit of a goal that can never be reached, to solve a problem that can never be solved. (Pp. xv-xvi)

Monday, February 14, 2005

Is It Bull?

The essay on [bull] arose from that kind of struggle. In 1986, Mr. Frankfurt was teaching at Yale, where he took part in a weekly seminar. The idea was to get people of various disciplines to listen to a paper written by one of their number, after which everyone would talk about it over lunch.

Mr. Frankfurt decided his contribution would be a paper on [bull]. "I had always been concerned about the importance of truth," he recalled, "the way in which truth is foundational to civilization and the various deformities of it that were current."

"I'd been concerned about the prevalence" of [bull], he continued, "and the lack of concern for truth and respect for truth that it represented."

"I used the title I did," he added, "because I wanted to talk about [bull] without any [bull], so I didn't use 'humbug' or 'bunkum.' "

Research was a problem. The closest analogue came from Socrates.

Rumor is, Frankfurt will soon be a celebrity guest on South Park.

Thursday, February 10, 2005

Dirty Harry - "Pinko" American Movie Star

But the most unintentionally revealing attacks on "Million Dollar Baby" have less to do with the "right to die" anyway than with the film's advertising campaign. It's "the 'million-dollar' lie," wrote one conservative commentator, Debbie Schlussel, saying that the film's promotion promises " 'Rocky' in a sports bra" while delivering a "left-wing diatribe" indistinguishable from the message sent by the Nazis when they "murdered the handicapped and infirm." Mr. Medved concurs. "They can't sell this thing honestly," he has said, so "it's being marketed as a movie all about the triumph of a plucky female boxer." The only problem with this charge is that it, too, is false. As Mr. Eastwood notes, the film's dark, even grim poster is "somewhat noiresque" and there's "nobody laughing and smiling and being real plucky" in a trailer that shows "triumph and struggles" alike.

What really makes these critics hate "Million Dollar Baby" is not its supposedly radical politics - which are nonexistent - but its lack of sentimentality. It is, indeed, no "Rocky," and in our America that departure from the norm is itself a form of cultural radicalism. Always a sentimental country, we're now living fulltime in the bathosphere. Our 24/7 news culture sees even a human disaster like the tsunami in Asia as a chance for inspirational uplift, for "incredible stories of lives saved in near-miraculous fashion," to quote NBC's Brian Williams. (The nonmiraculous stories are already forgotten, now that the media carnival has moved on.) Our political culture offers such phony tableaus as a bipartisan kiss between the president and Joe Lieberman at the State of the Union, not to mention the promise that a long-term war can be fought without having to endure any shared sacrifice or even too many graphic reminders of its human cost.

Rich is right to comment on the saccharine quality of American sentimentality (the British version might be called "treacle"). We have drifted ever more into an Oprah-like treatment of emotion, where even the innermost thoughts and feelings have to be packaged in a consumer-friendly format, and dragged out into the camera lights of morning network shows. Where is the privacy? Where is the personal reflection without need for public display?

What makes some feel betrayed and angry after seeing "Million Dollar Baby" is exactly what makes many more stop and think: one of Hollywood's most durable cowboys is saying that it's not always morning in America, and that it may take more than faith to get us through the night.

Innumerants on Parade

When his wife died and he decided in 2000 to move from his $800,000 Manhattan Beach home, Waldrep devised a plan to offer the dwelling as an essay contest prize instead of selling it.

Entrants would pay a $195 fee to participate in the writing competition. Waldrep pledged that 10% of the entry fees would be donated to a local charity that had assisted his dying wife.

But when the contest ended and Waldrep didn't move out of his house, one unhappy essay writer filed a class-action civil lawsuit alleging that the competition was fixed. Last week, a Los Angeles jury decided Waldrep had committed fraud and ordered him to return the entry fees, plus interest, to the writers.

It was when the jury returned Monday to award punitive damages to the writers that the plot thickened.

Jurors agreed that the contestants should additionally split between them the approximately $1 million for which Waldrep sold the house last year. But in a mix-up, jurors inadvertently awarded the 1,812 essayists $1 million each.

Jurors discovered their $1.8-billion mistake while chatting with lawyers after the trial. When they attempted to return to the courtroom of Superior Court Judge Andria Richey to rectify things, they learned they were too late: They had been dismissed.
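For anyone who wants to check the jurors' math, here is a quick sketch using only the figures quoted above: 1,812 essayists and a sale price of roughly $1 million. The variable names are mine, and the round $1,000,000 is the article's approximation rather than an exact figure.

```python
# Figures from the article quoted above; the sale price is approximate.
ESSAYISTS = 1_812
HOUSE_SALE_PRICE = 1_000_000  # "approximately $1 million"

# What the jurors meant to do: split the sale price among the essayists.
intended_share = HOUSE_SALE_PRICE / ESSAYISTS

# What they actually did: award the full sale price to each essayist.
mistaken_total = HOUSE_SALE_PRICE * ESSAYISTS

print(f"Intended share per essayist: ${intended_share:,.2f}")
print(f"Total actually awarded:      ${mistaken_total:,}")
```

On these assumptions each essayist was meant to receive a little over $550, while the verdict as entered comes to about $1.8 billion, which matches the figure in the article.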

Selling First Contact

Like many of you, I awoke this morning to NPR's interview with Michael Behar on his article in the February issue of Outside Magazine. Tourism, and especially armchair touristic journalism, is reaching out to increasingly remote locales (think space tourism) and, not surprisingly, more unbelievable tourist experiences. Behar plunked down $8,000 to American-born guide Kelly Woolford (a self-described "hillbilly") to take him into the jungles of West Papua, Indonesia and make "first contact" with a group of natives.

After almost spitting out my morning cup of coffee, and shaking my fist in a Basil Fawlty-like pose, my impressions were: (1) What "uncontacted" people? Most anthropologists agree that even remote hunter-gatherer groups have trading ties with surrounding societies and were therefore "contacted" a very long time ago. (2) Behar could have commented more on the doubts most anthropologists have about this type of expedition, and his research. (3) If it smells like a rotten egg, it probably is. Well-meaning people have been duped before into thinking they made the mythical "first contact" with a remote human group. In the 1970s the Tasaday in the Philippines were called an "untouched stone age people," a discovery which was later shown to be a hoax.

I was glad to see, however, that in his article Behar goes into more detail about the reactions of anthropologists he consulted after his trip, most of whom were quick to dispel any romantic notions of a human group unblemished by the sins of modernity:

"[West Papuan] tribes are not uncontacted in any absolute sense," argues Paul Michael Taylor, an anthropologist and curator at the Smithsonian National Museum of Natural History, in Washington, D.C. "They've been trading crocodiles and bird of paradise feathers and have had access to metals and tobacco for a long time. So they've always been in contact; it's just a question of degree."

Before leaving Nabire, Woolford agrees that his definition of first contact may need to be modified. Should we actually encounter a tribe in the jungle, he says, it might be impossible to determine whether they have ever set eyes on an outsider.

Behar then describes in detail the surprise contact with bow-and-arrow wielding natives, sending him scurrying to the river bank. Upon his return, these "pristine natives" are mugging for a camera shot with another tourist.

Two weeks later, back home in Virginia, I send three hours of our video footage to several anthropologists familiar with West Papuan tribes. None of them is convinced by it.

"I'm 95 percent sure it is a hoax," the University of Sydney's William Foley declares after watching it. He's struck by the fact that the natives didn't appear to have any skin diseases, which are endemic among bushmen. "This is unheard of for people living in the forest," he says. "The guys are too clean. Secondly, their dress is far too elaborate. That's the kind of dress they wear when doing a ceremony. That's not what they wear when they go out hunting and collecting food. All those headdresses—no way."

Other anthropologists have similar reactions. Paul Taylor, at the Smithsonian, adds that it wouldn't be too difficult to hire local villagers to stash their Western garb and then pretend to be "discovered" as Woolford's clients plod through the jungle. "The big question in my mind," says Taylor, "is whether this is something he's paid these people to do over and over again." Whatever is going on, Taylor doesn't like it. "If it's not a first-contact situation, then it's fraudulent. And if it is a first-contact situation, then it's an insensitive way to go about it."

When confronted with these refutations, Woolford reacts like the miner making a living on his claim who has been told by the professional geologist that his rocks are fool's gold, and not the real thing.

Woolford, for his part, fires right back when I run the anthropologists' remarks by him, starting with a comment that anyone who doubts his word should come along on a trip. "Some of these people are just lecturers at nice universities who have tenure and cushy jobs," he says. "If they think I've staged this, then come with me. I give them an open invitation to see for themselves. They can feel the energy of these guys, see them run around, see them barreling down and pointing arrows at them."

Really? Most researchers who study hunter-gatherers live in the field for a year or more for their doctoral research, continue to go back for summer or semester-long trips, and sometimes chalk up another year's worth of research during a sabbatical. All this for a pathetically weak job market, and lower pay than many other professions, so I am not taken in by his characterization of his critics as "lecturers at nice universities who have tenure and cushy jobs." I can hear ad hominem retorts like that on Fox News. Woolford is an entrepreneur, and is selling this experience to those with the money, so naturally he has to "find" something for them to see, making me even more suspicious of his motives. Read the article yourself, but I think you will come away with a similar reading.

"People pay a lot of money for this trip, and I want to try to find them something," he says. "But locating new tribes is getting harder and harder—and who knows what you are going to come across, if anything."

Wednesday, February 09, 2005

Payback!

For Fiorina, a marketing whiz at Lucent in the go-go 1990s, the CEO job was all about sales—selling employees on the merger with Compaq, customers on the products, investors on the stock, the board on her strategy, and the media on her. (Adam Lashinsky's 2002 Fortune article nicely captures her style.) But the controversial Compaq merger, which lashed HP's highly profitable printing business more tightly to a commodity PC business, didn't produce the synergies and higher profits she promised.

Over time, the gap between her polished rhetoric and the mediocre results grew wider. In July 2004, as Carol Loomis wrote in an excellent Fortune takeout, Fiorina gave a "totally upbeat speech at Allen & Co.'s big Sun Valley conference." A few weeks later, the company announced yet another disappointing quarter. While audiences of media moguls lapped up her speeches, the audiences that mattered most weren't buying what she was selling. The stock bumped along. Fiorina and her team didn't believe their own spiel, to judge by their actions. This record of insider transactions suggests that in the last two years instances in which directors or top executives dug into their own pockets to make even symbolic purchases of shares in the open market were few and far between.

Perhaps insiders and professional investors sensed that Fiorina was distracted. CEOs justify the money, resources, and time spent going to Davos, serving on corporate boards and chairing presidential commissions as ways to schmooze customers, network, and plant the corporate flag. But really, they're more about building a personal brand than a corporate brand. Fiorina's globe-trotting may have sent not-so-subtle messages to investors and her board that her heart wasn't in HP.

McEwan in the TLS

Narrative tension is an absolutely fundamental part of McEwan’s technique. The stop-start method – whereby he announces or hints that something terrible will happen, and then delays disclosure – is one of his most characteristic manoeuvres. It certainly works, driving the plot forward and ensuring the reader’s close attention. But sometimes it seems that suspense is used to mask some fundamental thinness. Certainly, rereading the final section without the benefit of adrenalin, it seems much less good, and much less interesting than the rest of the novel. Thematically, it doesn’t provide a close fit with what precedes it, except in the general sense that it is about security – the earlier nuances about the balance of threat and paranoia are lost. And, as in any television drama where the hero’s family is threatened, it provides a fairly generic catharsis: with the dream of righteous violence used against the invader. Again, you sense the unresolved conflict between nightmare and reason. The kinky cruelty of the early stories and novellas is still lurking, and the grown-up McEwan, rational and concerned, doesn’t quite know how to exorcize it. As a result, there are thematic resolutions which seem forced, episodes and ethical dilemmas which are not quite believable: a violent stand-off, for instance, in which a reading of Matthew Arnold’s poem “Dover Beach” plays a pivotal role . . . .

All Carrot, No Stick

Lawmakers backing the "homeland reinvestment" provision last year touted the idea of lower tax rates as a way to encourage companies to repatriate their earnings, and by putting extra money into corporate coffers, the thought was that companies would increase operating and investment activity and add jobs to payrolls.

Yet the devil in all this comes in the details. When it comes to the specifics in how the repatriated funds should be used, it turns out that what is considered permissible probably won't drive too many upswings in employment at all, and in some cases, could even spur layoffs.

In fact, a Morgan Stanley survey that was released late last year found that none of the investment firm's analysts believed that any of the companies they followed would use the repatriated earnings for hiring.

Five Pennies

Why is stealing nickels and pennies such a bad deal? In the 1790s, the first U.S. coins were produced in proportion to a silver dollar (which was in turn based on the Spanish 8 Reales—the first dollars contained the same amount of silver as the then-common Spanish coin). The 50-cent piece had half as much silver as the dollar by weight, the quarter had one-fourth, the dime had one-tenth, and the tiny half-dime (or 5-cent piece), had one-twentieth. Only the copper penny was fiduciary—its worth was not tied to the value of the metal it was made of.

Thanks to the lobbying efforts of nickel-industry magnate and business-school founder Joseph Wharton, the composition of fiduciary coins changed in the mid-19th century. In 1857, the old, large copper pennies were replaced with smaller "1-cent nickels" made of a nickel alloy. Soon after, the U.S. Mint issued 3-cent nickels of similar material. And in 1866, the small and impractical half-dime was made fiduciary too, and the mint released a 5-cent nickel. At the same time, the 1-cent nickel was converted to bronze, and a new, bronze 2-cent piece was created. These bronze pieces were heavier than their nickel counterparts, so from the very beginning, 45,000 pounds of 5-cent nickels would have been more valuable than 45,000 pounds of pennies.

Dismal Science, Dismal Fieldwork

But I was not interviewing just for information. I was trying to get people into the state of mind that they would read my final report when I wrote it, and read it appreciatively. I was trying to show them that I was highly intelligent and that I understood what was really going on in the country. I did this by keeping my mouth shut, listening carefully and respectfully to what they said, and writing it down.

At the same time I was collecting reports and statistics. My experience of other countries was that there should be dozens of highly relevant reports, but I could only find a handful here. The statistics were appalling. For example, there were two statistical studies on food production. They disagreed by 80% on the total area planted to rice, and by 60% on the yield per hectare even though they used the identical methodology. There were no reliable figures at all on most of the economy.

I had to resort to detective work. Making sense of the statistical and other information was rather like doing a crossword puzzle. No bit of information had any credibility until there were several cross confirmations. Even then, the credibility grew as the cross confirmations were themselves confirmed by down confirmations. Some of the key information turned out to be things like seeing Japanese rice on sale in a village market, and asking a stevedore how much rice had been unloaded from a ship.

By the end of the first month I felt I was the only person who had a broad picture of the food situation in the country, though there were still lots of gaps and loose ends. I had talked to people from the Minister down. I had talked to people who were experts in different parts of the market. I had any statistics that were available. Everything was starting to come into a coherent model.

Congratulations, Peter. You've just made the leap into primary data gathering. Now if only other economists could follow suit. Economic anthropologists have all sorts of horror stories about meeting consultants and econ-trained officials who make short trips to the "country" to take a look around, preferring to hire a bunch of assistants to administer some surveys while they talk to officials back in the capital. To his credit, Griffith sounds like an economist who has reflected on the real-world application of his profession and, in the above passage, the basics of fieldwork in developing nations.

Tuesday, February 08, 2005

Academic Online Research & Reading Fun

Our Latest Issue

Volume 10, Issue 2, January 2005

Gender, Identity, and Language Use in Teenage Blogs
David Huffaker & Sandra Calvert

The Language of Online Intercultural Community Formation
Justine Cassell & Dona Tversky

Hazing as a Process of Boundary Maintenance in an Online Community
Courtenay Honeycutt

Mechanisms of an Online Public Sphere: The Website Slashdot
Nathaniel Poor

The Role of the Habitus in Shaping Discourses about the Digital Divide
Lynette Kvasny

Experienced Presence within Computer-Mediated Communications: Initial Explorations on the Effects of Gender with Respect to Empathy and Immersion
Stef G. Nicovich, Gregory W. Boller, & T. Bettina Cornwell

A Content Analytic Comparison of Learning Processes in Online and Face-to-Face Case Study Discussions
Robert Heckman & Hala Annabi

The Media Downing of Pierre Salinger: Journalistic Mistrust of the Internet as a News Source
Thomas E. Ruggiero & Samuel P. Winch

Congress on the Internet: Messages on the Homepages of the U.S. House of Representatives, 1996 and 2001
Sharon E. Jarvis & Kristen Wilkerson

The Italian Extreme Right On-line Network: An Exploratory Study Using an Integrated Social Network Analysis and Content Analysis Approach
Luca Tateo

Research Brief

The Story of Subject Naught: A Cautionary but Optimistic Tale of Internet Survey Research
Joseph A. Konstan, B. R. Simon Rosser, Michael W. Ross, Jeffrey Stanton, & Weston M. Edwards

Reactions? While I'm not a big fan of many communication research projects (institutional allegiances), I do use social network analysis, some content analysis, and conceptual mapping from cognitive anthropology. But the brevity of some of these projects makes me think of their place within the larger context of academic publishing and Darwinian survival tactics in the Ivory Tower. The famous Red Queen Hypothesis proposed by Van Valen in evolutionary biology held that species had to "run in place" in order to stave off extinction, and it seems that an analogy might be made for certain research projects in certain academic fields.

Authenticity and Design

No one loves authenticity like a graphic designer. And no one is quite as good at simulating it. Recently on Speak Up, Marian Bantjes described the professional pride she took in forging a parking permit for a friend. "And I have to say," she admitted, "that it is one of the most satisfying design tasks I have ever undertaken." This provoked an outpouring of confessions from other designers who gleefully described concocting driver's licenses, report cards, concert tickets and even currency.

Every piece of graphic design is, in part or in whole, a forgery. I remember the first time I assembled a prototype for presentation to a client: a two-color business card, 10-point PMS Warm Red Univers on ivory Mohawk Superfine. The half-day process involved would be incomprehensible to a young designer working in a modern studio today; with its cutting, pasting, spraying, stirring and rubbing, it was more like making a pineapple upside-down cake from scratch. But what satisfaction I took in the final result. It was like magic: it looked real. No wonder my favorite character in The Great Escape wasn't the incredibly cool Steve McQueen, but the bewhiskered and bespectacled Donald Pleasence, who couldn't ride a stolen motorcycle behind enemy lines, but could make an imitation German passport capable of fooling the sharpest eyes in the Gestapo.

And the illusion works on yet another level. Consider: that business card was for a start-up business that until that moment had no existence outside of a three-page business plan and the rich fantasy life of its would-be founder. My prototype business card brought those fantasies to life. And reproduced en masse and handed with confidence to potential investors, it ultimately helped make the fantasy a reality. Graphic design is the fiction that anticipates the fact.

Walk This Way

3) Tell us about the models. Where do the models learn to walk? Does their agency teach them? And why do they walk like that? Why can't they just walk normally? Do the models really not eat? Do they diet before the shows?

You may have seen Jay Alexander, who works with Elite Plus in Paris, on America's Next Top Model. He's famous for teaching models to walk and is rumored to make quite a bundle doing so. But not every model takes walking lessons; some have a natural sense of presentation. "It depends on where the models are from," according to Andrew Weir, a New York casting director. "If they're from Brazil or South America, the walk is innate. The other girls have to watch the Brazilians for a season or two until they catch up."

There are a few walking styles. "If you say to the girls 'Do a Versace walk,' they know what that means—a va-va-voom, shake-it-like-you-might-break-it walk," says Weir. " 'Street' means no swish. It's strong, like the way people walk down a New York street." Most shows now use a near-natural street walk, described by Weir as " 'Street' plus a little bit more." That means a pretty natural stride with no hands on hips or posing. But the walk is still slightly exaggerated: Some extra swagger makes skirts swish dramatically and gives tailored looks a bit of extra power.

Although people don't like to believe it, models are not big dieters. They are blessed with fast metabolisms.

I still don't believe it.

Monday, February 07, 2005

Comfort Food in Iraq

Pass the ammunition and a slice of pizza. U.S. soldiers in Iraq spend hours -- sometimes days -- on patrol hunting insurgents and dodging roadside bombs. But when they get back to base, they can pick up a case of Dr. Pepper, buy the latest DVD and take a Pizza Hut meal back to the room to relax after a hard day at war.

A soldier's life isn't what it used to be.

Commanders say providing a good quality of life is essential to keeping volunteer troops in the military. Having a chance to skip the mess hall and go to Pizza Hut, Burger King or Subway -- Popeye's Fried Chicken and Taco Bell will be added this month -- makes a big difference, soldiers say.

"I think it's great. The dining facility gets old after a while," said Spc. William Oates, 25, a 1st Cavalry Division soldier from Asheboro, N.C., after finishing a Whopper at Camp Liberty, just outside Baghdad.

The Army & Air Force Exchange Service operates 23 fast food franchises at 16 U.S. bases in Iraq, with 25 more approved and under construction. They also have Seattle's Best and Green Beans coffee shops.

Terry McCoy, the food program manager for Iraq, opened the first Burger King at Baghdad's airport in May 2003, before the military even set up its first mess hall.

"This generation of soldiers has grown up with name-brand fast food," McCoy said. "It's the taste from home that they're missing. It not only gives them that little moment of comfort, I like to think it ... takes them back home for just the 15 minutes they are enjoying a Whopper."

Bubble World

And people get so sucked in and preoccupied that they don't even put down their cell phones when being helped by store clerks; headphones stay clamped on a head that continues to bop to whatever song is too good to pause for the sake of, um, common courtesy.

An interesting side effect to being plugged into one's own private universe while operating in the outside world is feeling excessively at ease. This makes people shift into at-home behavior -- the shoes might come off, a nose might get picked, a voice might be raised. When one is in the throes of rapping along to "99 Problems" or perhaps trying to download Seahawks scores on one's Blackberry, the distinction between the private self and public self is blurred. We are transported to our homes, where we surround ourselves with the things that relax us (video games, the sports section, music, etc.).

I recall taking the train to Vancouver a couple of years ago, and even as recently as then, there was much more chit-chat between strangers than there is now. The train ride north this Christmas proved to be very quiet, but for a few small children who weren't plugged into any electronics. This eliminates the charm of being on a trip, the chance meetings we have with each other, the jokes we overhear, the serendipitous connections that make leaving one's home and going outside worth it. It wipes off all social fingerprints from ourselves, leaving us untouched and alone.

I had a similar experience at the Van Gogh/Gauguin: The Studio of the South exhibit at the Art Institute of Chicago in 2002. Most of the crowd splurged for the self-guided audio tour, which meant that as one of the few without a headset, I viewed the exhibit with a small but constant buzz of commentary in the background. I could tell when the tape asked the patrons to move to the next picture because dozens of heads moved to the next picture in choreographed unison and everyone shuffled over, like a pack of the worst foreign tourists you've ever seen. No chatter among the viewers, no agreement, and no discord, making me feel as though I had missed some of the "social fingerprints" that tend to accompany anticipated art events.

Back to the Future Monday

I have little doubt that one of my former Nixon White House colleagues is history's best-known anonymous source — Deep Throat. But I'll be damned if I can figure out exactly which one.

We'll all know one day very soon, however. Bob Woodward, a reporter on the team that covered the Watergate story, has advised his executive editor at the Washington Post that Throat is ill. And Ben Bradlee, former executive editor of the Post and one of the few people to whom Woodward confided his source's identity, has publicly acknowledged that he has written Throat's obituary.

Last week All the President's Men was on cable, so I wonder if Deep Throat will actually look anything close to Hal Holbrook in the 1976 movie classic. It was a fun time-trip to see working typewriters with carbon paper, wide ties, rotary telephones, bad floral-pattern blouses that look like they were meant to match the wallpaper, and endless bouts of smoking.

But when I mentioned this to a colleague at the office, she immediately recalled the "Watergate cake." What!? I hadn't realized that a culinary offering was associated with that politically infamous episode. So, for those of you who might be interested (as I was) here is the Watergate Cake recipe:

1 pkg white cake mix

3/4 cup vegetable oil

3 large eggs

1 cup 7-up or club soda

1 (3 oz) package pistachio instant pudding

1 cup chopped nuts (pecans are the best)

1/2 cup coconut

COVER-UP ICING:

6 oz whipped topping mix (dry) (2 envelopes)

1 1/2 cups milk

1 (3 oz) package pistachio instant pudding

1/2 cup coconut

3/4 cup chopped nuts (use pecans if that is what you used in the cake. match the nuts)

Combine the ingredients in the order given, blending well after each addition. Pour into a 13x9-inch pan and bake in a preheated 350 degree F. oven for 35 to 45 minutes, or until the cake tests done.

ICING: Combine the topping mix, milk and pudding. Beat until thick. Spread on the cake (the icing will be a light green color). Sprinkle with the coconut and chopped nuts.

Friday, February 04, 2005

Ernst Mayr

Dr. Ernst Mayr, the leading evolutionary biologist of the 20th century, died on Thursday in Bedford, Mass. He was 100.

Dr. Mayr's death, in a retirement community where he had lived since 1997, was announced by his family and Harvard, where he was a faculty member for many years.

He was known as an architect of the evolutionary or modern synthesis, an intellectual watershed when modern evolutionary biology was born. The synthesis, which has been described by Dr. Stephen Jay Gould of Harvard as "one of the half-dozen major scientific achievements in our century," revived Darwin's theories of evolution and reconciled them with new findings in laboratory genetics and in field work on animal populations and diversity.

One of Dr. Mayr's most significant contributions was his persuasive argument for the role of geography in the origin of new species, an idea that has won virtually universal acceptance among evolutionary theorists. He also established a philosophy of biology and founded the field of the history of biology.

"He was the Darwin of the 20th century, the defender of the faith," said Dr. Vassiliki Betty Smocovitis, a historian of science at the University of Florida in Gainesville.

Bad News Friday

The seventh annual State of the Region report by the Southern California Association of Governments ranks the quality of life in the region as a D-plus -- potentially failing.

Housing and air quality worsened in 2003, while the grades for traffic, education, household income and public safety remained static. And while the number of jobs in the six-county region rose by 14,000, personal income for its 17.7 million residents stayed flat.

"The fundamental issue this region faces ... is the income issue. Without an increase in wages and per capita income we're not going to have the resources to deal with these issues," said Mark Pisano, executive director of SCAG.

The report details a slate of interconnected problems plaguing Southern California.

Students perform below the national median on reading and math test scores, while 76 percent of residents do not have a college degree -- elements that limit their ability to get high-paying jobs.

Meanwhile, well-paying manufacturing jobs that have moved to less-expensive areas have been replaced by lower-paying service jobs. With less wealth, residents have to travel to far-flung suburbs to be able to afford a home, which then worsens congestion and pollution.

And on the national level, the latest jobs report shows a slight increase in overall employment from 2001, but a closer look reveals that while the unemployment rate fell, the actual number of people in the labor market is down by 3.4 million! (From the Economic Policy Institute)

The nation’s payrolls increased by 146,000 last month, and the unemployment rate fell to 5.2%, its lowest level since September 2001, according to today’s report from the Bureau of Labor Statistics.

The decline in the unemployment rate was, however, due to a fall in the labor force participation rate (LFPR) from 66.0% to 65.8%, the lowest LFPR since May 1988 and 1.5 percentage points below its most recent peak in April 2000.

Given today’s adult population, this translates into 3.4 million fewer persons in the job market. Since only active jobseekers are counted in the official unemployment rate, this long slide in the LFPR has artificially depressed the jobless rate, which would be higher if some of those who left the job market were actively looking for work.

As of last month, payroll levels have finally surpassed their pre-recession peak. In February 2001—the month before the recession was declared to have begun—payrolls stood at 132,546,000. Thanks in part to an addition of 203,000 jobs as part of the BLS annual revisions, payrolls stood at 132,573,000 last month, 27,000 jobs above the last peak. (Note, however, that this is due to the growth of government employment; private sector employment remains 703,000 jobs below its pre-recession level).